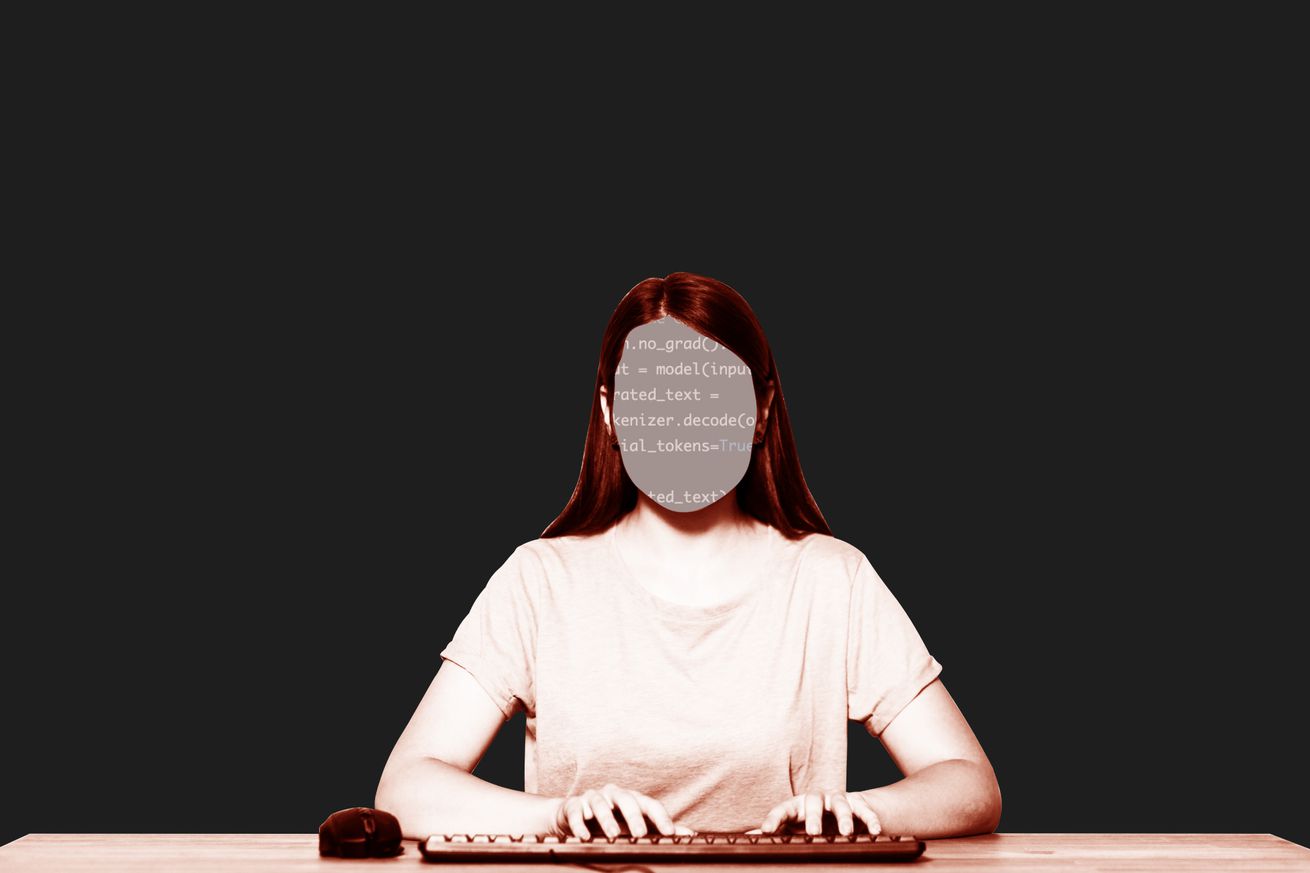

A few months ago, my doctor showed off an AI transcription tool he used to record and summarize his patient meetings. My summary was fine, but researchers cited by ABC News have found that’s not always the case with OpenAI’s Whisper, which powers a tool many hospitals use: sometimes it just makes things up entirely.

A company called Nabla uses Whisper in a medical transcription tool that, by the company’s estimate, has transcribed 7 million medical conversations, according to ABC News. More than 30,000 clinicians and 40 health systems use it, the outlet writes. Nabla is reportedly aware that Whisper can hallucinate, and is “addressing the problem.”

A group of researchers from Cornell University, the University of Washington, and…